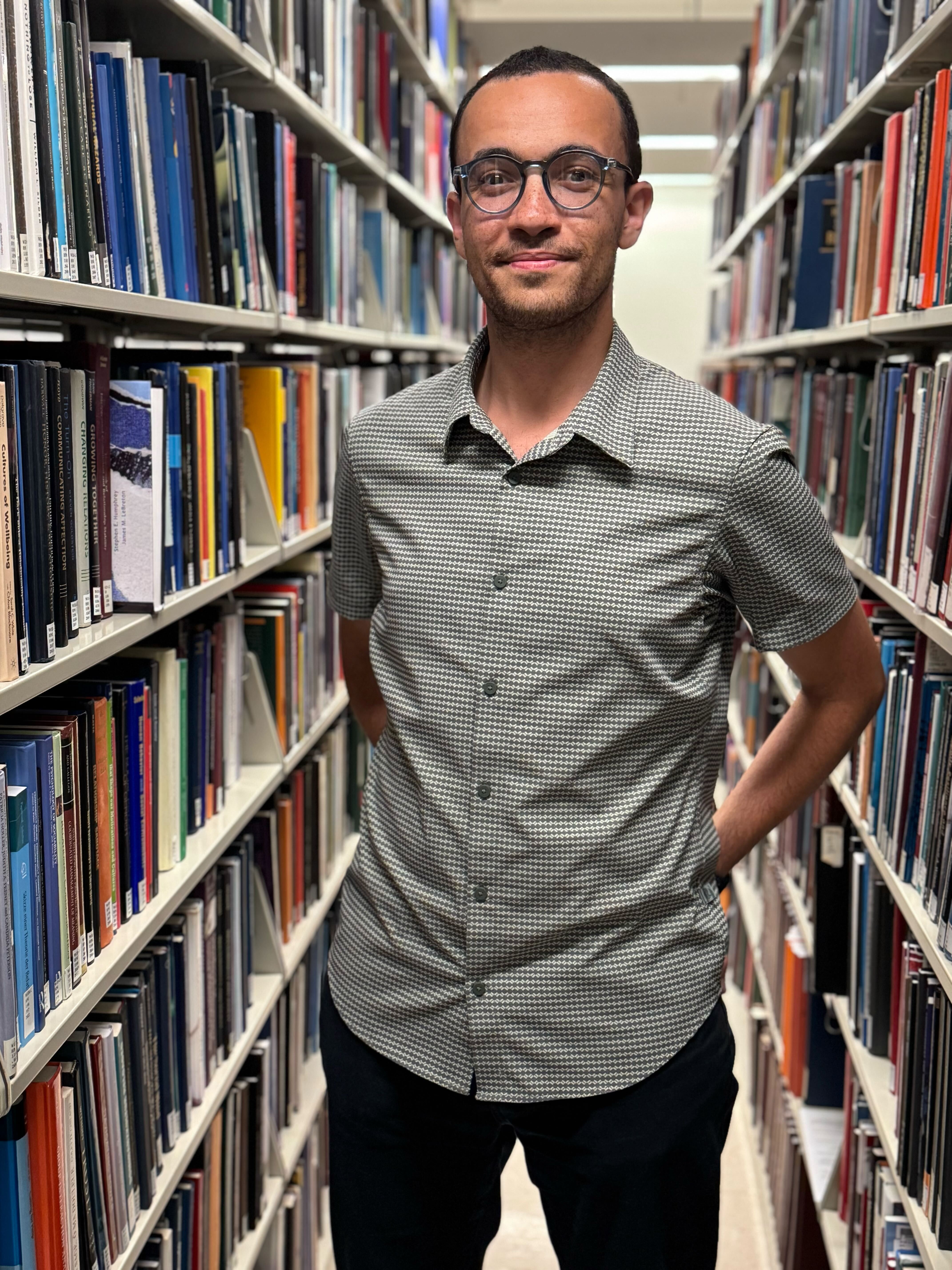

3rd year PhD Student - Harvard University

I study how humans perceive, understand, and produce speech. To investigate these phenomena, I build computational models that can reproduce human behavior and generate testable predictions about the perceptual and neural basis of human spoken communication.

I am currently a third-year PhD student in the Speech and Hearing Bioscience and Technology (SHBT) Program at Harvard University. I conduct my research in the Laboratory for Computational Audition at MIT, where I am advised by Josh McDermott.

I earned my B.Sc. in Systems and Biomedical Engineering from Cairo University (Egypt). During my undergraduate studies, I developed computational models of motor neurons to study the early stages of ALS (Thesis).

I later completed an M.Sc. in Neuroscience and Neuroengineering at EPFL (Switzerland), where I was introduced to speech machine learning and cognitive neuroscience. As a Bertarelli fellow, I moved to HMS and MIT to complete my master's thesis with Satrajit Ghosh in the Senseable Intelligence Group, studying how humans and artificial neural network models recognize and represent voices (Thesis).

Education

Harvard University, Ph.D. in Speech and Hearing Bioscience and Technology, Sep. 2023 - present

Harvard University, A.M. in Speech and Hearing Sciences (4.0 GPA), Sep. 2023 - Nov. 2025

EPFL, M.Sc. in Neuroscience and Neuroengineering (with distinction, mention d'excellence), Sep. 2020 - April 2023

Cairo University, B.Sc. in Systems and Biomedical Engineering (with honors), Sep. 2015 - Aug. 2020

Experience

IDIAP Research Institute, Speech Machine Learning Intern, April 2023 - Aug. 2023

Logitech, Voice AI Intern, Sep. 2021 - Feb. 2022

Machine Learning and Optimization Laboratory, ML & Data Visualization Research Assistant, March 2021 - Oct. 2021